AI is no longer one skill set.

In 2026, the term AI engineer covers very different kinds of work. Some people want to build AI features into applications. Some want to move fully into AI engineering and understand modern models deeply. Some want to master the full lifecycle of large language models. Others want to design, secure, deploy and scale production AI systems across cloud and enterprise environments.

This is where many learners get stuck.

They mix together Python, machine learning, RAG, agents, fine-tuning, serving and cloud deployment without understanding which skills belong to which path. The result is confusion, shallow learning and very few real systems.

A better approach is to choose the path that matches your goal.

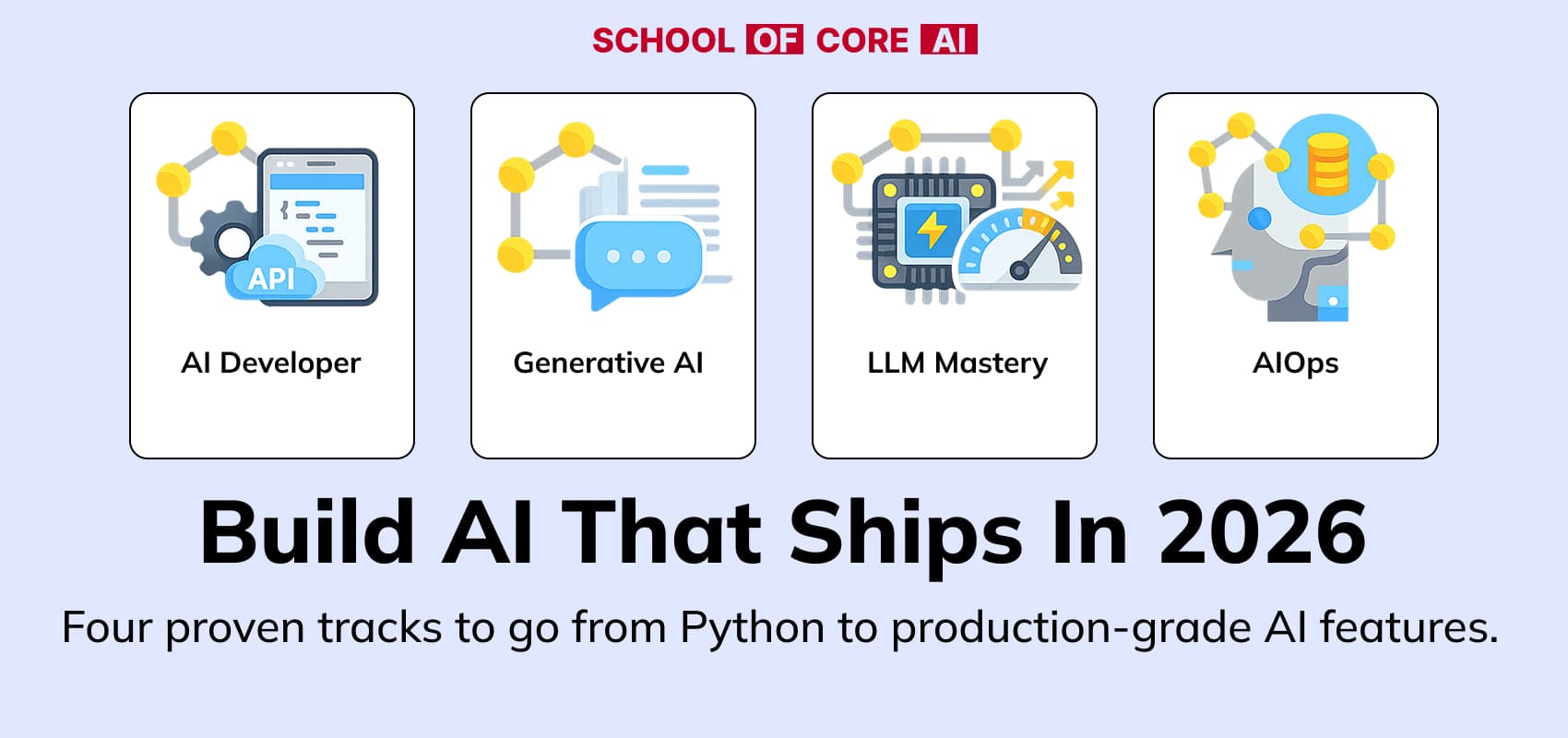

This roadmap breaks AI engineering into four practical tracks: AI Developer, Generative AI, LLM Mastery and AIOps.

Each path solves a different problem. Each path builds a different kind of engineer.

Why AI Engineering Needs Different Tracks in 2026

The AI ecosystem has expanded quickly. Building with AI now involves multiple layers of knowledge:

- At one layer, you may only need enough understanding to integrate an LLM into a product, wrap it in an API and ship a working user-facing feature.

- At another layer, you may need deeper model understanding across neural networks, transformers, vision-language models, diffusion models, fine-tuning methods, retrieval systems and modern agent frameworks.

- At a more specialized level, you may want to understand the full lifecycle of LLMs, including tokenization, pretraining concepts, instruction tuning, quantization, alignment, inference systems, evaluation and optimization.

- At the highest systems layer, the challenge becomes architectural. You are no longer asking how to build one AI feature. You are asking how to deploy, secure, monitor, scale, govern and lifecycle-manage AI systems in real environments.

These are different jobs. That is why they should be treated as different tracks.

AI Developer Path

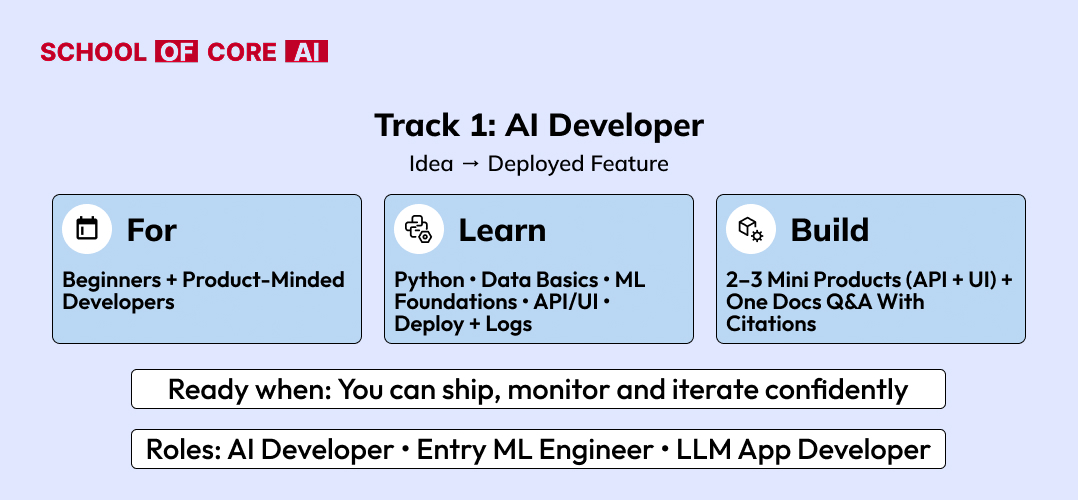

The AI Developer path is for people who want to build AI-powered product features.

This path is especially relevant for software developers, full-stack engineers, backend developers and even learners from other streams who want to move into applied AI by building usable systems rather than studying theory in isolation.

The goal here is not to become a model researcher. The goal is to become someone who can take AI capabilities and turn them into product features that users can interact with.

That means learning enough to build, integrate, deploy and improve AI functionality inside applications.

Who this path is for

This path fits:

- software developers moving into AI product development

- backend or full-stack engineers who want to build AI features

- developers who already build web apps and want to add LLM workflows

- learners from non-CS or adjacent technical backgrounds who want a practical entry into AI engineering

What this path focuses on

The AI Developer path should cover the practical foundations needed to build AI features end to end:

- Python

- ML and AI basics

- Vector databases

- Generative AI basics

- Core LLM understanding

- RAG

- Agentic AI

- API integration

- simple deployment workflows

- user feedback loops and iteration

This is important because many learners try to jump directly into advanced agent frameworks or fine-tuning without first knowing how to build a stable AI application.

An AI Developer should be able to answer questions like:

How do I turn an LLM workflow into an actual user-facing feature?

How do I expose it through FastAPI or a frontend?

How do I connect retrieval, prompts, and tool calls into a usable product?

How do I improve the system after users start interacting with it?
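To make the question about connecting retrieval and prompts concrete, here is a minimal sketch of that pipeline in plain Python. Everything in it is an assumption made for illustration: the `DOCUMENTS` list stands in for a vector database, word-count cosine similarity stands in for real embeddings, and `fake_llm` is a hypothetical placeholder for an actual model call.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory corpus; a real system would query a vector database.
DOCUMENTS = [
    "Refunds are processed within five business days.",
    "Password resets are available from the account settings page.",
    "Support is available by email on weekdays.",
]


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, context):
    """Place the retrieved passages ahead of the user question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


def fake_llm(prompt):
    """Stand-in for a model call: it simply echoes the first context line."""
    for line in prompt.splitlines():
        if line.startswith("- "):
            return line[2:]
    return "I don't know."


def answer(question, llm=fake_llm):
    """Retrieve context, build the prompt, then call the (stubbed) model."""
    context = retrieve(question, DOCUMENTS)
    return llm(build_prompt(question, context))


if __name__ == "__main__":
    print(answer("How long do refunds take?"))
```

In a real feature, `answer` is the function you would put behind an API route or a UI handler, and `fake_llm` is the seam where a hosted or local model would be plugged in. Keeping retrieval, prompt construction and the model call as separate functions also makes each piece easier to test and improve once users start interacting with it.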

What this role actually does

An AI Developer typically builds services using tools such as FastAPI, Streamlit or Next.js. They integrate models into applications, connect retrieval systems, build interfaces, deploy MVPs, collect feedback and ship improved versions over time.

This role is product-facing. It is about turning AI capability into software.

Typical outcomes

By the end of this path, a learner should be able to build:

- AI-powered app features

- document Q&A systems

- internal copilots

- chatbot interfaces

- lightweight RAG applications

- agent-based workflows connected to APIs or business logic

This is the most practical entry point for people who want to build with AI rather than just study it.

Generative AI Path

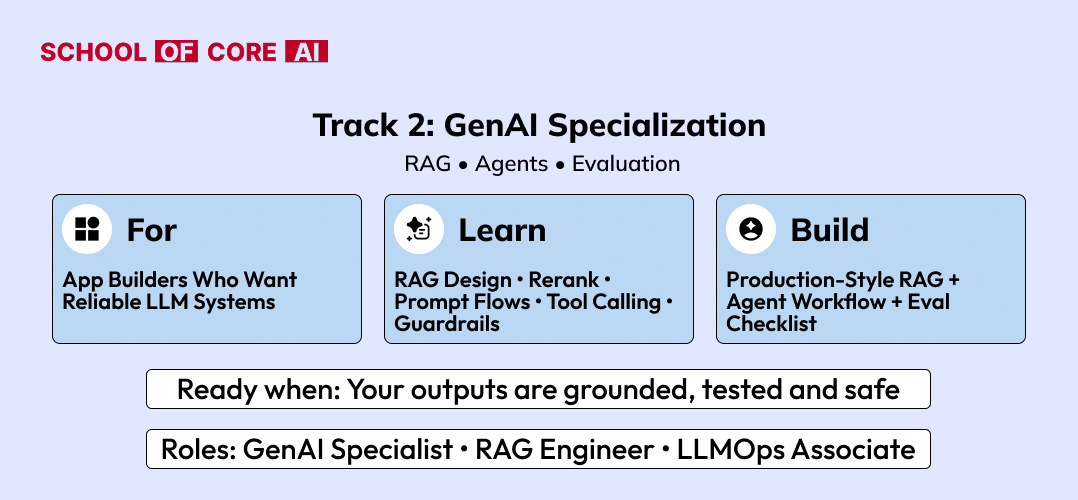

The Generative AI path is for people who want to transition seriously into AI engineering and understand the broader modern AI ecosystem in depth.

This is not just about using LLM APIs. It is about understanding the technical layers behind modern AI systems across machine learning, deep learning, neural networks, transformer architectures, generative models, multimodal systems, fine-tuning methods, retrieval workflows, agents and serving concepts.

This path is broader and deeper than the AI Developer path.

While AI Developer is about building features, the Generative AI path is about becoming technically strong across the foundational and applied layers of modern AI.

Who this path is for

This path fits:

- developers transitioning into AI engineering

- ML learners who want stronger modern GenAI depth

- engineers who want to move beyond prompting into real model and system understanding

- professionals preparing for AI engineer roles with broader technical expectations

What this path focuses on

This track should go much deeper into the full modern AI landscape:

- mathematics for ML and deep learning

- machine learning foundations

- deep learning fundamentals

- neural networks in depth

- CNNs

- RNNs

- transformers

- vision transformers

- diffusion models

- LLMs

- VLMs

- multimodal systems

- fine-tuning

- quantization

- serving basics

- RAG

- agents

- MCP and modern orchestration layers

This track matters because today’s AI engineer roles increasingly expect a broader mental model. It is no longer enough to know prompting and one framework. Engineers are expected to understand why models behave the way they do, how different architectures differ and how to choose the right system design for the problem.

What this role actually does

A Generative AI engineer builds systems that combine model understanding with application design. They work across retrieval, model usage, prompting, workflow orchestration, tool use, agents, multimodal inputs, evaluation and deployment.

They do not just call models. They design systems around them.

Typical outcomes

By the end of this path, a learner should be able to build:

- reliable RAG systems

- agentic workflows

- multimodal AI applications

- retrieval and tool-calling pipelines

- domain-specific GenAI systems

- production-ready applications with evaluation and structured outputs

This path is well suited for people who want to step into AI engineer roles with broader capability than simple application integration.

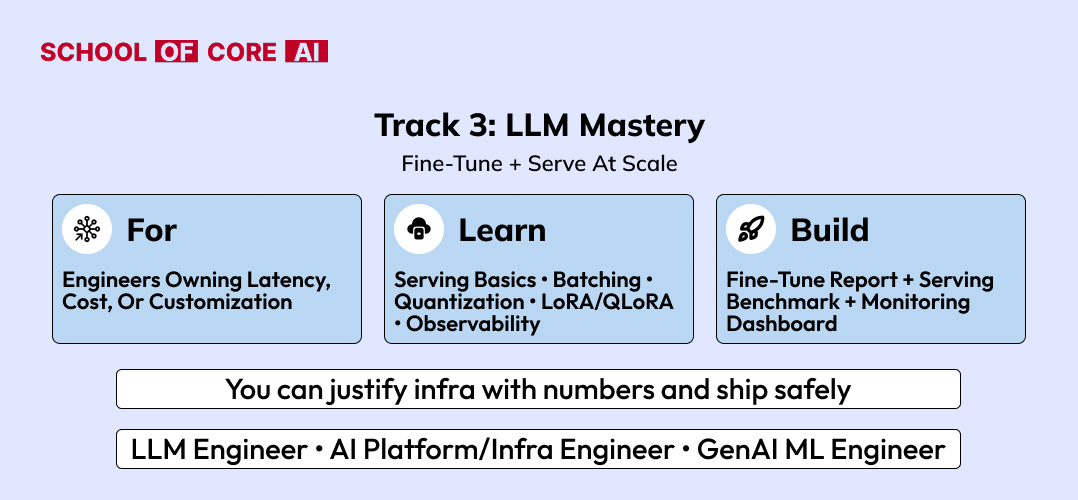

LLM Mastery Path

The LLM Mastery path is for people who want to understand the LLM lifecycle in much greater depth.

This is the right path for learners and engineers who already know the broad GenAI landscape and now want deeper command over how large language models are built, adapted, optimized, aligned, evaluated and served.

This path is more focused than Generative AI. It is not about covering the whole AI ecosystem. It is about going deeper into LLMs specifically.

Who this path is for

This path fits:

- engineers who want to specialize in LLM systems

- builders working on LLM performance and customization

- developers who want stronger understanding of fine-tuning and serving

- learners interested in the deeper lifecycle of language models

What this path focuses on

This track should go from fundamentals to advanced lifecycle understanding:

- tokenization and embeddings

- transformer internals

- pretraining concepts

- instruction tuning

- supervised fine-tuning

- PEFT

- LoRA

- QLoRA

- quantization

- inference optimization

- vLLM and TGI

- batching and scheduling

- KV-cache

- evaluation

- observability

- alignment basics

- RLHF concepts

- DPO and post-training ideas

- latency, throughput, and cost tradeoffs

- safety and guardrail considerations

This track is valuable because many people use LLMs without really understanding how model behavior changes through tuning, compression, alignment and serving choices. LLM Mastery is what closes that gap.
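As a small worked example of how compression and serving choices show up in practice, the sketch below estimates weight memory at different precisions and KV-cache memory for a given context length. The model shape used here (7 billion parameters, 32 layers, 32 key/value heads, head dimension 128) is an illustrative assumption, not a reference to any specific model, and the estimate deliberately ignores activations, quantization metadata and framework overhead.

```python
GIB = 1024 ** 3


def weight_bytes(n_params, bits_per_weight):
    """Approximate memory for the weights alone at a given precision."""
    return n_params * bits_per_weight // 8


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Approximate KV-cache size.

    The leading factor of 2 accounts for storing one key tensor and one
    value tensor per layer. bytes_per_value=2 assumes 16-bit values.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


if __name__ == "__main__":
    n_params = 7_000_000_000  # assumed model size, for illustration only
    for bits in (16, 8, 4):
        gb = weight_bytes(n_params, bits) / 1e9
        print(f"{bits}-bit weights: {gb:.1f} GB")

    for batch in (1, 8):
        size = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=batch)
        print(f"KV cache, batch {batch}: {size / GIB:.0f} GiB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts weight memory to a quarter, while the KV cache grows linearly with both batch size and sequence length. That is why quantization, batching and context length end up being a single tradeoff between latency, throughput and cost rather than three separate decisions.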

What this role actually does

Someone following this path works closer to the model layer. They compare serving backends, fine-tune models for domain-specific tasks, measure performance, optimize latency and memory, evaluate tradeoffs and build more controllable LLM systems.

They care about what happens before and after the API call.

Typical outcomes

By the end of this path, a learner should be able to build:

- fine-tuned LLM systems

- optimized inference services

- evaluation-driven LLM workflows

- quantized deployment pipelines

- observability and performance dashboards

- lifecycle-aware LLM applications

This is the path for people who want more than LLM usage. It is for people who want to understand LLM systems properly.

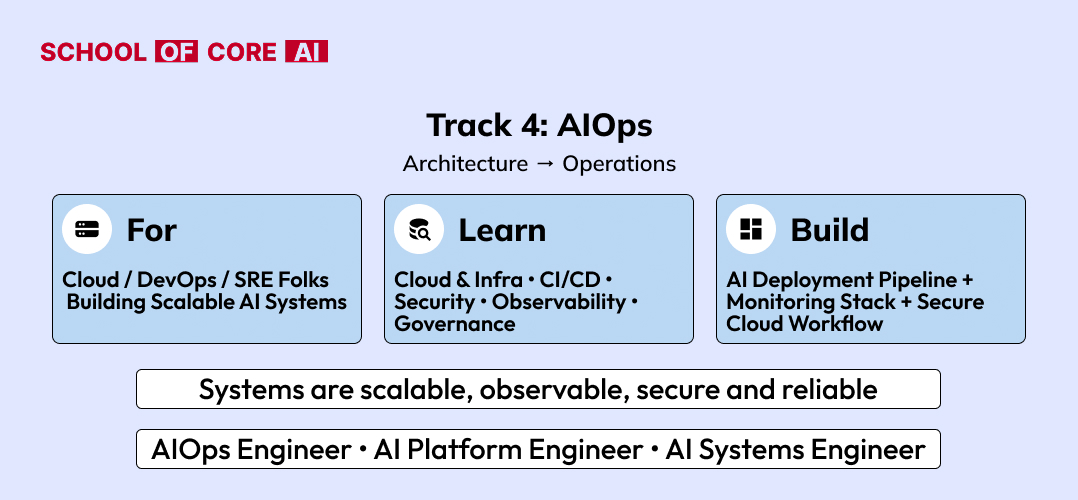

AIOps Path

The AIOps path is for people who want to think from architecture to operations.

This track is less about one model and more about how AI systems live in real organizations. It focuses on scalability, security, cloud architecture, governance, observability, deployment design, lifecycle management, and production operations.

This is where AI engineering becomes systems engineering.

Who this path is for

This path fits:

- engineers thinking at solution architecture level

- DevOps, platform, cloud and SRE professionals moving into AI systems

- senior developers who want to own deployment, lifecycle and scale

- teams building enterprise AI infrastructure

What this path focuses on

This track should include:

- scalable AI system design

- cloud deployment patterns

- infrastructure design for AI workloads

- model lifecycle management

- CI/CD for AI systems

- security for AI applications and data pipelines

- governance and access control

- observability and monitoring

- incident handling and rollback design

- cost management

- reliability engineering

- multi-environment deployment

- compliance-aware AI architecture

- production evaluation workflows

- orchestration of AI systems across services

This path matters because building an AI demo is not the same as running AI in production across teams, users, infrastructure and security boundaries.

What this role actually does

An AIOps-focused engineer or architect designs AI systems that can survive real-world usage. They think about deployment architecture, cloud patterns, secrets and access, latency budgets, lifecycle management, monitoring, scaling, resilience and governance.
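One way to picture a latency budget tied to rollback design is a simple release gate. The sketch below is an assumption-heavy illustration: the function names, the 2000 ms p95 budget and the 2% error-rate ceiling are all hypothetical values chosen for the example, and a real platform would read these numbers from its monitoring stack rather than from Python lists.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def should_roll_back(latencies_ms, errors, requests,
                     p95_budget_ms=2000, max_error_rate=0.02):
    """Return True when a release breaks the latency or error budget.

    Both thresholds are illustrative defaults, not recommended values.
    """
    error_rate = errors / requests if requests else 0.0
    over_latency = percentile(latencies_ms, 95) > p95_budget_ms
    over_errors = error_rate > max_error_rate
    return over_latency or over_errors


if __name__ == "__main__":
    healthy = [100] * 19 + [5000]      # one slow outlier, p95 still fine
    degraded = [100] * 10 + [3000] * 10
    print(should_roll_back(healthy, errors=0, requests=20))
    print(should_roll_back(degraded, errors=0, requests=20))
```

The design point is that the gate looks at a tail percentile rather than the average, so a single slow request does not trigger a rollback but a sustained shift in the tail does.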

They are responsible for making AI systems operational, secure and sustainable.

Typical outcomes

By the end of this path, a learner should be able to design and support:

- scalable AI services

- cloud-based AI deployment architectures

- enterprise-ready AI pipelines

- monitored and governed AI systems

- reliable lifecycle-managed AI platforms

- production architectures that support multiple teams and workloads

This path is ideal for engineers who want to move from building AI features to designing AI systems at organizational scale.

How These Four AI Career Paths Differ

The easiest way to understand the roadmap is to look at what each track is optimizing for.

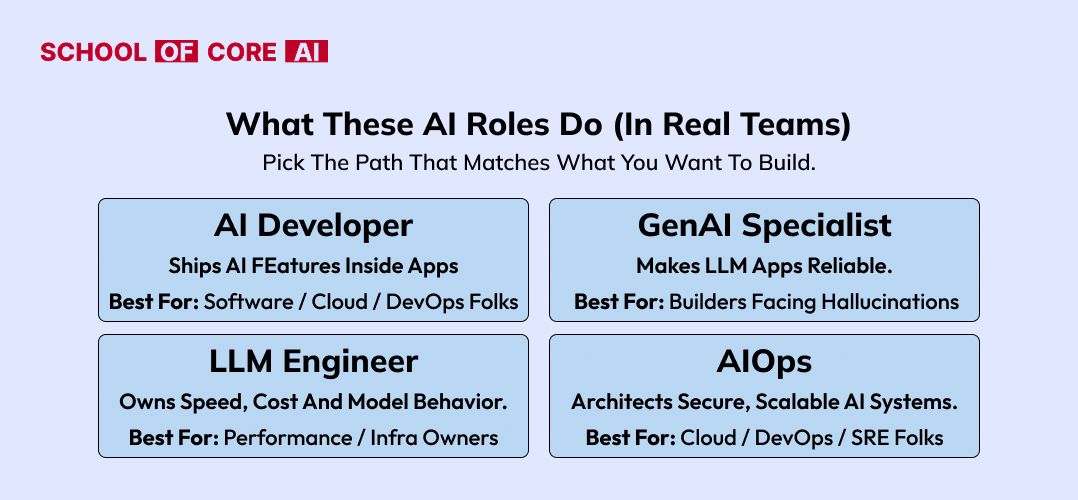

- The AI Developer path is optimizing for product implementation. It teaches how to build AI-powered features and ship them inside applications.

- The Generative AI path is optimizing for broader technical transition into AI engineering. It builds deeper understanding across modern AI systems, architectures, and workflows.

- The LLM Mastery path is optimizing for specialization in language model lifecycle, performance, and adaptation.

- The AIOps path is optimizing for architecture, deployment, scale, governance, and operations.

These tracks can connect naturally, but they should not be presented as identical.

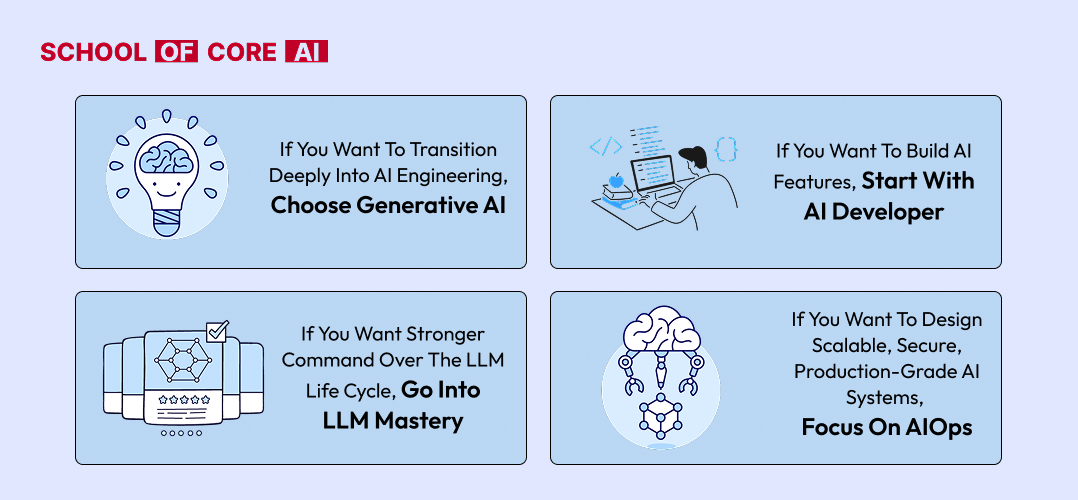

Which AI Path Should You Choose

Not everyone needs to follow all four tracks. The right order depends on your goal.

A software developer who wants to build AI features may begin with AI Developer and later move into Generative AI.

Someone who wants stronger model depth may move from Generative AI into LLM Mastery.

An engineer thinking at infrastructure or enterprise scale may move from LLM Mastery into AIOps, or enter AIOps directly from a cloud, DevOps or platform background.

A simple progression often looks like this:

- Start with AI Developer if your goal is practical product building.

- Move into Generative AI when you want broader AI engineering depth.

- Go into LLM Mastery when you want to specialize in LLM systems.

- Choose AIOps when your focus becomes architecture, scale, cloud, security and lifecycle management.

What Recruiters Look for in AI Engineers

The market has matured. Companies no longer want learners who have only watched tutorials or copied notebooks. They want people who can demonstrate practical understanding.

- For AI Developer roles, recruiters want to see working AI features, APIs, retrieval flows, interfaces and shipped projects.

- For Generative AI roles, they want to see deeper understanding of modern AI systems, stronger reasoning around architectures and more robust project design.

- For LLM-focused roles, they want to see evidence of fine-tuning, serving, quantization, evaluation and performance awareness.

- For AIOps or architecture-focused roles, they want to see cloud thinking, lifecycle understanding, deployment maturity, monitoring and production reliability.

A strong portfolio should show:

- what problem was solved

- why a certain architecture was chosen

- how the system was evaluated

- how it was deployed or operationalized

- what tradeoffs were handled

That is what makes a portfolio credible, both to the people reviewing it and in search visibility.

Final Thoughts on Becoming an AI Engineer in 2026

The biggest mistake in AI learning is trying to learn everything without choosing a direction.

The right path depends on what you want to build.

AI engineering in 2026 is not about collecting concepts. It is about building capability in the right layer of the stack.